The $4.1 Billion Bug Nobody Caught

Extraction is solved. Verification isn't.

Last week I asked two systems the same question: What does Ares Capital’s portfolio look like?

One is a widely-used open-source tool that pulls SEC filings. The other is a deterministic extraction engine that runs tournament-style competition on document parsing.

Both returned BDC portfolio holdings from the same 10-Q filing. Same document. Same company. Same quarter.

The open-source tool returned 1,426 rows and $32.8 billion in fair value.

The tournament engine returned 1,383 rows and $28.7 billion.

That’s a $4.1 billion discrepancy. From the same filing.

where the money went

The open-source tool was double-counting.

BDC Schedule of Investments filings contain both individual holdings and company subtotals: summary rows that roll up the positions above them. The tool ingested both as separate positions. 121 rows were subtotals. 24 were exact duplicates of classified holdings. Three were pure metadata labels (“Largest Portfolio Company Investment”).

The tool didn’t distinguish between data and summaries. It treated every row as a holding and inflated the portfolio by $4.1 billion. Nobody caught it because there was no mechanism to catch it.
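To make the failure mode concrete, here is a minimal sketch of the filter the tool was missing. It is not either system’s actual code. The `Row` fields, the label markers, and the sample rows are all made up for illustration, and a real filing would need much more careful rules than a prefix match.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    company: str
    instrument: str
    fair_value: float  # illustrative units, not real filing data


# Hypothetical markers for rows that summarize rather than hold a position.
SUBTOTAL_PREFIXES = ("total", "subtotal")
METADATA_LABELS = {"largest portfolio company investment"}


def is_summary(row: Row) -> bool:
    """True for subtotal rows and pure metadata labels."""
    label = row.instrument.strip().lower()
    return label in METADATA_LABELS or label.startswith(SUBTOTAL_PREFIXES)


def clean_holdings(rows: list[Row]) -> list[Row]:
    """Drop summary rows and exact duplicates, keeping first occurrences."""
    seen: set[Row] = set()
    holdings: list[Row] = []
    for row in rows:
        if is_summary(row) or row in seen:
            continue
        seen.add(row)
        holdings.append(row)
    return holdings


# Invented sample rows: two real positions, a subtotal, a duplicate, a label.
rows = [
    Row("Example Co A", "First lien senior secured loan", 10.0),
    Row("Example Co A", "Common equity", 2.0),
    Row("Example Co A", "Total", 12.0),
    Row("Example Co B", "First lien senior secured loan", 5.0),
    Row("Example Co B", "First lien senior secured loan", 5.0),
    Row("", "Largest Portfolio Company Investment", 12.0),
]

naive_total = sum(r.fair_value for r in rows)
clean_total = sum(r.fair_value for r in clean_holdings(rows))
print(naive_total, clean_total)
```

Treating every row as a holding more than doubles the toy portfolio. The filter is the easy part. The hard part is knowing you need one, which is where verification comes in.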

Here’s what it looks like side by side:

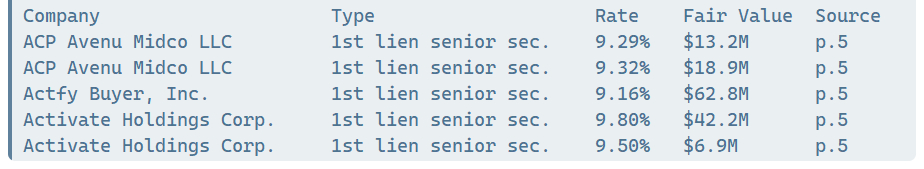

Tournament engine (first 5 holdings):

Open-source tool (first 5 rows):

121 “Unclassified” rows = $5.7B in phantom holdings

Totals:

Open-source tool: 1,426 rows, $32.8B fair value

Tournament engine: 1,383 rows, $28.7B fair value

Gap: 43 rows, $4.1B

the real problem isn’t extraction

This is a pattern I keep seeing. The conversation about AI in finance fixates on whether a model can extract the data. Can it read the PDF? Can it parse the table? Can it handle the footnotes?

Those are solved problems. Multiple tools can extract BDC holdings from SEC filings. Half a dozen vendors will sell you the output. ChatGPT can take a swing at it.

But extraction without verification is a liability. If you can’t prove the output is correct, if there’s no benchmark, no provenance, no receipt, you’re just trusting the pipeline. And the pipeline has bugs. $4.1 billion worth of bugs.

what verification actually looks like

The tournament engine’s approach is what changed my thinking on this. Multiple extraction strategies run against the same document and compete. The one that scores highest against ground truth becomes the champion.

The reason this matters: no single extraction strategy is universally best. A strategy that handles BDC Schedule of Investments perfectly might choke on CLO waterfall tables. One that parses clean HTML filings flawlessly might hallucinate structure on scanned PDFs. The tournament doesn’t pick a winner once and declare victory. It applies competitive pressure per document type, per filing, per issuer. The best strategy for Ares Capital’s 10-Q might not be the best strategy for Prospect Capital’s. That’s fine. The tournament finds the right one each time and proves it with a score.
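The selection step can be sketched in a few lines. This is my own toy version, assuming a simple overlap score against a ground-truth set. The strategy names, the pipe-delimited document format, and the scoring function are all hypothetical, and the real engine’s scoring is not described here.

```python
from typing import Callable

Row = tuple[str, float]              # (label, fair value), illustrative only
Strategy = Callable[[str], list[Row]]


def parse_all(doc: str) -> list[Row]:
    """Toy strategy: treat every line as a holding."""
    pairs = (line.split("|") for line in doc.splitlines())
    return [(name, float(value)) for name, value in pairs]


def parse_skip_totals(doc: str) -> list[Row]:
    """Toy strategy: same parse, but drop rows labeled as totals."""
    return [r for r in parse_all(doc) if not r[0].lower().startswith("total")]


def score(extracted: set[Row], truth: set[Row]) -> float:
    """Jaccard overlap: penalizes both missing rows and extra rows."""
    if not extracted and not truth:
        return 1.0
    return len(extracted & truth) / len(extracted | truth)


def run_tournament(doc: str, strategies: dict[str, Strategy], truth: set[Row]):
    """Score every strategy on this document and return the champion."""
    scores = {name: score(set(fn(doc)), truth) for name, fn in strategies.items()}
    champion = max(scores, key=scores.get)
    return champion, scores


# Invented document and ground truth for illustration.
document = "Example Co A loan|10\nExample Co A equity|2\nTotal Example Co A|12"
truth = {("Example Co A loan", 10.0), ("Example Co A equity", 2.0)}

champion, scores = run_tournament(
    document,
    {"parse_all": parse_all, "parse_skip_totals": parse_skip_totals},
    truth,
)
print(champion, scores)
```

Because the tournament runs per document, the champion for one filing says nothing about the next one. A different issuer’s layout could hand the win to a different strategy.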

The output ships with an evidence pack:

The extracted data

Source document fingerprints

Airlock boundary proof (proving the raw document never entered the model directly)

Benchmark results (7,432 assertions for Ares Capital, zero failures)

The champion strategy’s identity and tournament generation

Every number traces back to a source page. Every claim is independently verifiable. You don’t trust the producer. You verify the evidence.
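One way to picture an evidence pack is as a record that anyone can check without trusting whoever produced it. The sketch below is an assumption about shape, not the engine’s actual schema: every field name is hypothetical, SHA-256 stands in for whatever fingerprinting is really used, and the airlock proof is left out because I can’t describe its format. Only the assertion counts come from the Ares Capital run above.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(document_bytes: bytes) -> str:
    """SHA-256 hex digest of the raw source document."""
    return hashlib.sha256(document_bytes).hexdigest()


@dataclass
class EvidencePack:
    rows: list                   # the extracted data
    source_sha256: str           # source document fingerprint
    assertions_run: int          # benchmark results
    assertions_failed: int
    champion_strategy: str       # which strategy won
    tournament_generation: int   # and in which generation


def verify_source(pack: EvidencePack, document_bytes: bytes) -> bool:
    """Check that the pack was built from exactly this document."""
    return pack.source_sha256 == fingerprint(document_bytes)


# Stand-in bytes; a real check would read the filing as downloaded.
filing = b"placeholder bytes standing in for the 10-Q"

pack = EvidencePack(
    rows=[],
    source_sha256=fingerprint(filing),
    assertions_run=7432,
    assertions_failed=0,
    champion_strategy="hypothetical-strategy-name",
    tournament_generation=1,     # made-up value
)

print(verify_source(pack, filing))            # same bytes
print(verify_source(pack, filing + b" "))     # one byte different
```

The point of the fingerprint is that the consumer recomputes it. If the hash of the filing you downloaded does not match the pack, the data describes some other document.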

And those 7,432 assertions aren’t a one-time QA pass. They’re permanent benchmark infrastructure: deterministic, re-runnable, executed against every extraction, every time. When someone says “we tested it,” ask whether the test runs once or forever. That’s the line that separates verified data from an expensive spot-check.
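Here is roughly what “runs forever” could look like in code: assertions written as pure functions, so the same input always gives the same verdict and the whole suite can run against every extraction. The three checks and the sample rows are invented for illustration. They are not drawn from the actual 7,432.

```python
def no_summary_rows(rows, reported_total):
    """No row should be a subtotal posing as a holding."""
    return all(not r["name"].lower().startswith("total") for r in rows)


def no_duplicate_rows(rows, reported_total):
    """No holding should appear twice with identical values."""
    keys = [(r["name"], r["fair_value"]) for r in rows]
    return len(keys) == len(set(keys))


def sums_to_reported_total(rows, reported_total):
    """Holdings should add up to the total the filing itself reports."""
    return abs(sum(r["fair_value"] for r in rows) - reported_total) < 0.005


ASSERTIONS = [no_summary_rows, no_duplicate_rows, sums_to_reported_total]


def run_benchmark(rows, reported_total):
    """Return the names of failed assertions; an empty list means a pass."""
    return [check.__name__ for check in ASSERTIONS
            if not check(rows, reported_total)]


# Invented rows for illustration.
good = [
    {"name": "Example Co A loan", "fair_value": 10.0},
    {"name": "Example Co A equity", "fair_value": 2.0},
]
bad = good + [{"name": "Total Example Co A", "fair_value": 12.0}]

print(run_benchmark(good, 12.0))
print(run_benchmark(bad, 12.0))
```

A subtotal that slips through trips two checks at once: it is a summary row, and it breaks the reconciliation against the reported total. That redundancy is deliberate. One check can have a blind spot; several rarely share the same one.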

the question that should follow every extraction

Once you have verified data with provenance, a natural question emerges: can other systems call this? Not as a static export sitting in someone’s S3 bucket, but as a live endpoint that returns structured, sourced answers on demand?

This is already happening. The tournament engine behind this analysis is live as a callable agent on Kovrex. You can query it for verified BDC holdings and get structured data back with a provenance receipt attached. The first holding (ACP Avenu Midco LLC, 9.29%, SOFR (Q), 5.00% spread) is on page 5 of the actual 10-Q filing. Go look. That’s what provenance means in practice.

curl -X POST https://gateway.kovrex.ai/v1/call/foundry-consult \
  -H "Authorization: Bearer YOUR_KOVREX_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "operator": "cmd-rvl",
    "intent": "discover",
    "query": {
      "document_family": "bdc_holdings",
      "issuer": "ARCC"
    }
  }'

Live issuers: ARCC, BXSL, PNNT. Agent prospectus: https://kovrex.ai/agents/foundry-consult
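If you would rather call it from code, the same request can be built with Python’s standard library. The URL, headers, and payload are copied from the curl call. The function names are mine, and since I am not documenting the response format here, the sketch returns the raw body rather than guessing at its fields.

```python
import json
import urllib.request

ENDPOINT = "https://gateway.kovrex.ai/v1/call/foundry-consult"


def build_request(api_key: str, issuer: str = "ARCC") -> urllib.request.Request:
    """Build the same POST the curl example sends, without sending it."""
    payload = {
        "operator": "cmd-rvl",
        "intent": "discover",
        "query": {"document_family": "bdc_holdings", "issuer": issuer},
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def call_agent(api_key: str, issuer: str = "ARCC") -> str:
    """Send the request and return the raw response body as text."""
    with urllib.request.urlopen(build_request(api_key, issuer)) as response:
        return response.read().decode("utf-8")


# Building the request needs no network access or real key.
request = build_request("YOUR_KOVREX_API_KEY")
print(request.get_method(), request.full_url)
```

Calling `call_agent` with a real key sends the request. Swapping the `issuer` argument for BXSL or PNNT queries the other live issuers.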

the lesson

When someone tells you they have AI that reads financial documents, ask them one question:

How do you know it’s right?

If the answer is “we tested it,” ask for the evidence pack. If there isn’t one, you don’t have verified data. You have an expensive guess.

The gap in capital markets AI isn’t extraction. It’s proof.

This week, BDC valuations hit 52-week lows. Private credit is under stress for the first time in years. When the market’s calm, a $4.1 billion data error is an embarrassment. When it’s stressed, it’s a risk management failure. The tools you use to value portfolios matter most when the portfolios are moving.