Everyone Is Wrong About How AI Disruption Actually Works

I replaced Salesforce in a weekend. Not the way you think.

Last week, Citrini Research published a speculative report modeling what happens when AI displaces white-collar jobs at scale. The S&P drops 38%. SaaS companies default on their debt. The mortgage market cracks. IBM fell 13% on Monday. Software stocks cratered. A thought experiment moved real markets.

The responses split into two camps, and both are wrong.

Camp one: “This is inevitable. AI will replace everyone.” They picture a company plugging an LLM into their operations and watching headcount evaporate. Drop it in, jobs go away, margins expand, repeat.

Camp two: “This is alarmist. AI can’t do real work.” They’ve tried ChatGPT, gotten mediocre results, and concluded the hype is overblown. Enterprise software is too complex. Real workflows are too nuanced. You can’t just replace Salesforce in a weekend.

I replaced Salesforce in a weekend.

But not the way either camp imagines.

The Part Everyone Gets Wrong

AI is not a “drop it into your company and jobs disappear” technology. Not yet. Maybe not ever in the way people picture it.

What AI is, right now, is a superhuman augmentation device for building things. And almost no one is talking about it that way.

Here’s an example. A senior economist asked Claude for the latest inflation rate of a specific CPI component. The figure was in the BLS report, but Claude didn’t find it directly. Instead, it tried to calculate the rate. It pulled the latest index level correctly from FRED, but instead of grabbing the level from 12 months earlier to compute the year-over-year change, it launched into a complicated estimation process and got the wrong answer.

His conclusion: LLMs have structural deficiencies. Their probabilistic nature makes them unreliable for anything requiring precision. As tasks get larger and more complex, the mistakes compound.

He’s right about the failure. He’s wrong about the lesson.

The right way to solve that problem isn’t “ask Claude for the inflation rate.” It’s “ask Claude to write a script that pulls the CPI time series from FRED’s API, computes the 12-month rate of change, and returns the answer.” That script takes 30 seconds to generate, runs perfectly every time, and doesn’t hallucinate because the math is deterministic code, not probabilistic generation.
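A minimal sketch of what such a script might look like, in Python, assuming FRED’s `series/observations` endpoint with JSON output. The function names are illustrative, the API key is one you would supply yourself, and `CPIAUCSL` in the usage note is only an example series ID, not the component the economist asked about.

```python
import json
import urllib.parse
import urllib.request

FRED_URL = "https://api.stlouisfed.org/fred/series/observations"


def fetch_observations(series_id: str, api_key: str) -> list[dict]:
    """Download every observation for one FRED series as a list of dicts."""
    query = urllib.parse.urlencode(
        {"series_id": series_id, "api_key": api_key, "file_type": "json"}
    )
    with urllib.request.urlopen(f"{FRED_URL}?{query}") as response:
        return json.load(response)["observations"]


def year_over_year(observations: list[dict]) -> tuple[str, float]:
    """Return (latest date, percent change versus the same month a year earlier)."""
    # FRED reports values as strings and marks missing data with ".".
    levels = {o["date"]: float(o["value"]) for o in observations if o["value"] != "."}
    latest = max(levels)  # ISO dates sort correctly as plain strings
    year, rest = latest.split("-", 1)
    prior = f"{int(year) - 1}-{rest}"
    if prior not in levels:
        raise ValueError(f"no observation for {prior}")
    return latest, (levels[latest] / levels[prior] - 1.0) * 100.0
```

Calling `year_over_year(fetch_observations("CPIAUCSL", api_key))` would return the latest date and its 12-month rate of change. The arithmetic lives in ordinary code, so the same inputs always give the same answer.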

That distinction is the whole game.

AI as oracle: unreliable. AI as builder: transformative.

When you ask an LLM to be a lookup tool or a calculator, you’re fighting its weaknesses. When you ask it to build a deterministic system that does the lookup and the calculation, you’re leveraging its strengths. The AI writes the tool. The AI doesn’t run the tool. The tool runs the tool.

This is what people miss. The superpower isn’t asking AI questions. It’s having access to the most brilliant coders in the world, available instantly, at any hour. Then marry that with the ability to understand a codebase, connect to a database, and reason about what a product is supposed to do. With this in place, the AI does not just write code; it understands the intent behind it.

That’s what changes everything. Not AI replacing workers. AI supercharging the people who know what needs to be built.

The Three Phases

I work in finance. We’d been on Salesforce for years. It stored data. It didn’t work the way we work. Our workflows don’t map to Salesforce’s Opportunity → Quote → Close model. They never did.

Here’s how I actually replaced it. Not magic. A repeatable process with three distinct phases.

Phase 1: Conversation

This is where most people stop, and it’s where the real leverage begins.

I use Projects in Claude pretty heavily. One project per domain. Pipeline management lives in one project. Product ideas live in another. When I want to think about a problem space, I go to the project that holds months of accumulated context about that space.

The project doesn’t need a codebase. It needs to understand everything you’re thinking about in this domain. Your workflows. Your frustrations. Your edge cases. The thing you’ve been meaning to fix for three years but never had the time or budget.

Over months of conversations about how we actually manage relationships, track deals, and move things through our pipeline, Claude developed a deep understanding of our domain. Not because I wrote a requirements document. Because I talked about my work the way I talk about it every day.

“Here’s how we track a deal from first conversation to signed agreement.”

“Here’s what it means when a relationship goes quiet for six months.”

“Here’s the difference between these two types of counterparties and why we manage them differently.”

Then I started a new chat within that project:

That single question, asked in a context that already understood our entire domain, produced something remarkable. Not just a feature list. Clickable TypeScript mockups. Data model diagrams. Workflow specifications. Edge cases I hadn’t considered. Features we had talked about for years but never articulated as requirements, because we’d internalized Salesforce’s limitations as our own.

The result wasn’t a cheaper version of Salesforce. It was something Salesforce could never be: a system shaped entirely around how we actually work, with every component connected the way our business thinks, not the way a SaaS vendor’s product team decided it should work.

The output of Phase 1 isn’t code. It’s the most detailed, domain-aware specification you’ve ever seen, produced by the person who knows the domain best, refined through conversation with something that can organize, challenge, and extend your thinking.

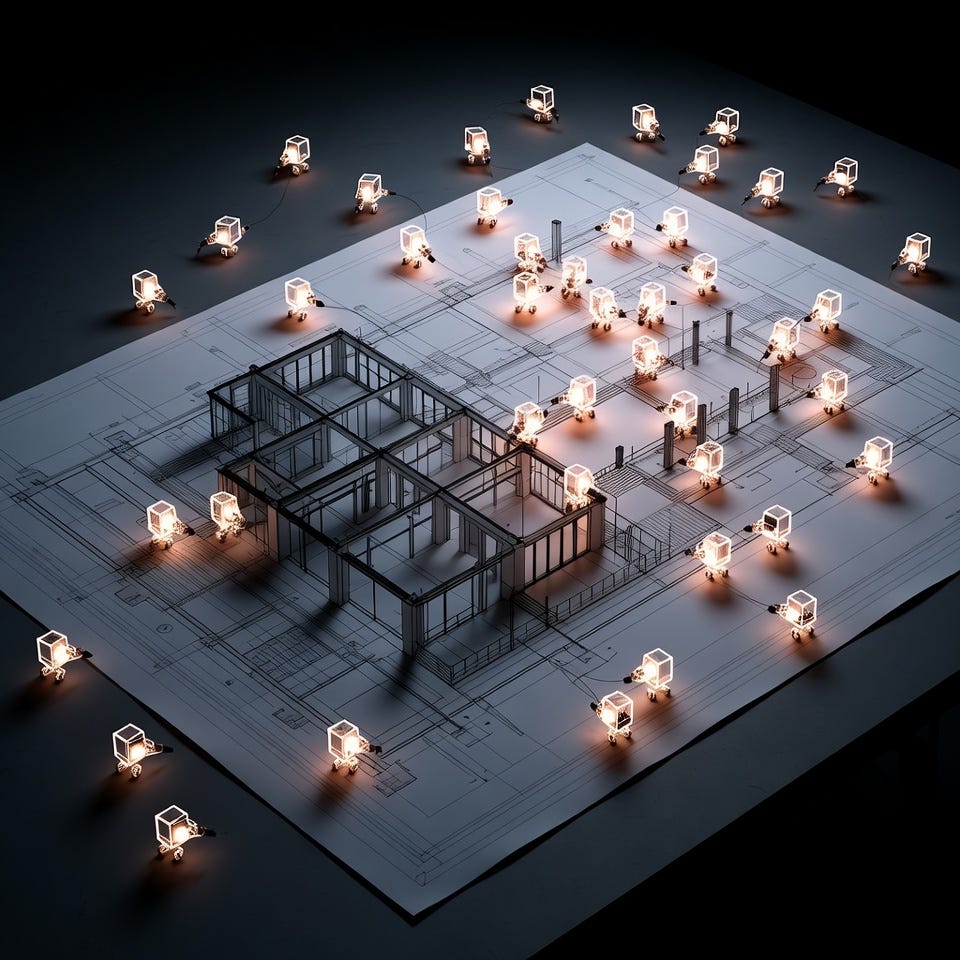

Phase 2: The Agent Swarm

There are several tools for this now, but I’m using Overstory at the moment. The concept is the same across all of them: parallel AI coding agents working simultaneously on a coordinated build.

You take the spec from Phase 1. Break it into discrete units of work. Give the swarm a clean git repo. And go.

The swarm isn’t one agent writing code sequentially. It kicks off with a coordinator that manages the overall architecture. A lead agent reviews the specifications and parcels out multiple parallel streams of work. Coder agents execute on each stream. When they make mistakes, tester agents catch them. Review agents validate quality. Merge agents understand how the parallel codebases fit back together.

It’s a full development team, running in parallel, for the cost of API credits.

This obviously depends on good specs. But this is exactly why Phase 1 matters so much. Months of domain conversation, refined into clear specifications, fed into a system designed to execute on exactly that kind of input. The better the thinking in Phase 1, the cleaner the output from Phase 2.

The result: a full-stack web application with a proper database, custom pipeline funnels mapped to how we actually work, contact and organization management, email ingestion, and relationship scoring. 30+ database tables. Certainly not every feature Salesforce has. But definitely every feature we actually need.

About $30 in API credits. Push to the repo with the original specs attached so that any subsequent agent touching this codebase understands not just what the code does, but what it was meant to do and why.

Phase 3: The Last Mile

Pull the codebase down locally. Open Cursor or Claude Code. Get the app running.

For a simple environment, it often works immediately. For real enterprise deployment (AWS, Azure, CI/CD pipelines, single sign-on, managed services) it takes some tweaking. But this is where the magic of having an intelligent agent sitting in your editor becomes obvious.

Opus for complex reasoning and data migration. Codex for deep codebase understanding. Composer for speed. It’s lightning fast at fixing deployment configurations, tweaking git actions, adjusting UI components. They all have strengths and weaknesses, and part of getting good at this is knowing which model to reach for. And, to be honest, by the time you read this, the versions and names of those specific models probably will have changed.

Data migration is the thing that skeptics say can’t be done. We had a full dump of our entire Salesforce database. Tons of contacts. Years of data accumulated across different teams, different processes, and multiple iterations of how the organization worked.

The old way: an eight-week consulting engagement. A team of people mapping fields, cleaning records, testing edge cases, validating relationships.

What actually happened: I gave the dump to Opus alongside the new codebase. “How do we migrate this without creating duplicate contacts, duplicate companies? And that tagging system we always wanted to implement in Salesforce but never did because it was too much manual work? Do that too.”

One hour. The model analyzed the entire dataset, filtered out dead contacts and system noise, mapped the rest to the new schema, preserved relationships and pipeline stages, and implemented the tagging logic we had talked about for years.

$15 in API credits.
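For a sense of what one deterministic step in a migration like this can look like, here is a minimal Python sketch of contact deduplication. It is an illustration, not the code the model produced. The field names (`email`, `name`, `company`, `last_modified`) are assumptions about the export, and it assumes every row carries a sortable `last_modified` value such as an ISO date string.

```python
def normalize_email(raw: str) -> str:
    """Lowercase and trim so trivially different spellings compare equal."""
    return raw.strip().lower()


def dedupe_contacts(rows: list[dict]) -> list[dict]:
    """Collapse rows sharing an email, preferring the most recent non-empty values."""
    merged: dict[str, dict] = {}
    # Process oldest first, so newer non-empty fields overwrite older ones.
    for row in sorted(rows, key=lambda r: r["last_modified"]):
        email = normalize_email(row.get("email", ""))
        if not email:
            continue  # no usable key; a real migration would route these to review
        record = merged.setdefault(email, {"email": email})
        for field, value in row.items():
            if field != "email" and value not in (None, ""):
                record[field] = value
    return list(merged.values())
```

Matching on a normalized email is the simplest possible key. Real data usually needs fuzzier matching on names and companies, which is where having the model analyze the actual dump earns its keep.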

The Math That Breaks Everything

A traditional Salesforce-to-custom-CRM migration runs into six figures and takes six months or more with a systems integrator, even for a relatively small implementation.

You could do it today for $50 in API credits and a weekend.

We were paying five figures a year for Salesforce. The replacement cost less than a single day of that subscription. If a five-figure annual subscription can’t survive a $50 weekend project, imagine what happens to the six-figure contracts that are the real revenue drivers for enterprise SaaS.
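The arithmetic is easy to check. A short Python sketch using only the figures quoted in this section, and reading “six figures” as anything from $100,000 to $999,999 (that range is my assumption, since no exact quote is given):

```python
import math

BUILD_COST = 50.0  # API credits for the weekend build, in dollars


def orders_of_magnitude(old_cost: float, new_cost: float) -> float:
    """How many powers of ten separate the two costs."""
    return math.log10(old_cost / new_cost)


def daily_cost(annual_price: float) -> float:
    """Average cost per day of an annual subscription."""
    return annual_price / 365


# Low and high ends of a six-figure traditional migration.
for traditional in (100_000, 999_999):
    gap = orders_of_magnitude(traditional, BUILD_COST)
    print(f"${traditional:,} vs ${BUILD_COST:.0f}: {gap:.1f} orders of magnitude")

# Annual price at which a single day of the subscription costs as much as the build.
print(f"break-even subscription: ${BUILD_COST * 365:,.0f} per year")
```

On those assumptions the build is roughly 3.3 to 4.3 orders of magnitude cheaper than the traditional migration, and any subscription above $18,250 a year costs more per day than the entire replacement did.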

That’s the math Citrini is modeling. Not “AI takes your job.” AI gives domain experts the ability to build exactly what they need, for almost nothing, faster than anyone thought possible.

Why Both Camps Are Wrong

Camp one imagines AI as a job-replacement machine. Plug it in, headcount drops, repeat. That misunderstands the mechanism. What’s actually happening is that the people who understand the work are gaining the ability to build their own tools. That’s not “AI replacing workers.” That’s the entire build-vs-buy equation inverting because the build side just got three to four orders of magnitude cheaper.

Camp two imagines AI as a slightly better autocomplete. They’ve asked it questions, gotten wrong answers, and concluded the technology is fundamentally limited. Like the economist and his inflation query, they’re testing AI as an oracle and finding it unreliable. They’re not wrong about that. They’re wrong about what it means. The three-phase process (deep domain conversation that yields a specification, coordinated agent execution, intelligent last-mile refinement) doesn’t ask AI to be right. It asks AI to build things that are right. It’s a workflow, not a prompt. And the systems it produces are deterministic, not probabilistic.

The disruption isn’t “AI writes code.” The disruption is “domain experts can now build production systems that fit their exact needs, and the cost of doing so is essentially zero compared to the SaaS alternative.”

What Happens Next

The Citrini report projects SaaS defaults starting in 2027 as recurring revenue stops recurring. Their timeline might be aggressive. The mechanism is not.

Every company with a slightly unusual workflow (and in financial services, that’s all of them) is one motivated domain expert away from discovering that the software they’ve been renting doesn’t need to be rented.

It won’t happen all at once. Enterprises move slowly. Procurement cycles are real. But the pattern I just described doesn’t go through procurement. It starts as one person solving their own problem on a weekend. Then their team adopts it because it actually works. Then the renewal conversation becomes “we already built something better.”

Shadow IT just became the most capable engineering team in the building.

I’m not predicting the S&P drops 38%. I’m reporting something simpler: the core assumption behind enterprise SaaS valuations (that custom software is too expensive for most companies) is already false. The three-phase workflow that makes it false is learnable, repeatable, and gets more powerful with every model generation.

The Citrini thesis is not fiction. It’s a description of what happens when enough people figure out what I figured out last weekend.

The question is how fast that spreads.
